Boolean Dreams

Chapter 2 of Lady Lovelace's Objection: a history of computing from Ada Lovelace to large language models

“The design of the following treatise is to investigate the fundamental laws of those operations of the mind by which reasoning is performed.” — George Boole, An Investigation of the Laws of Thought, 1854

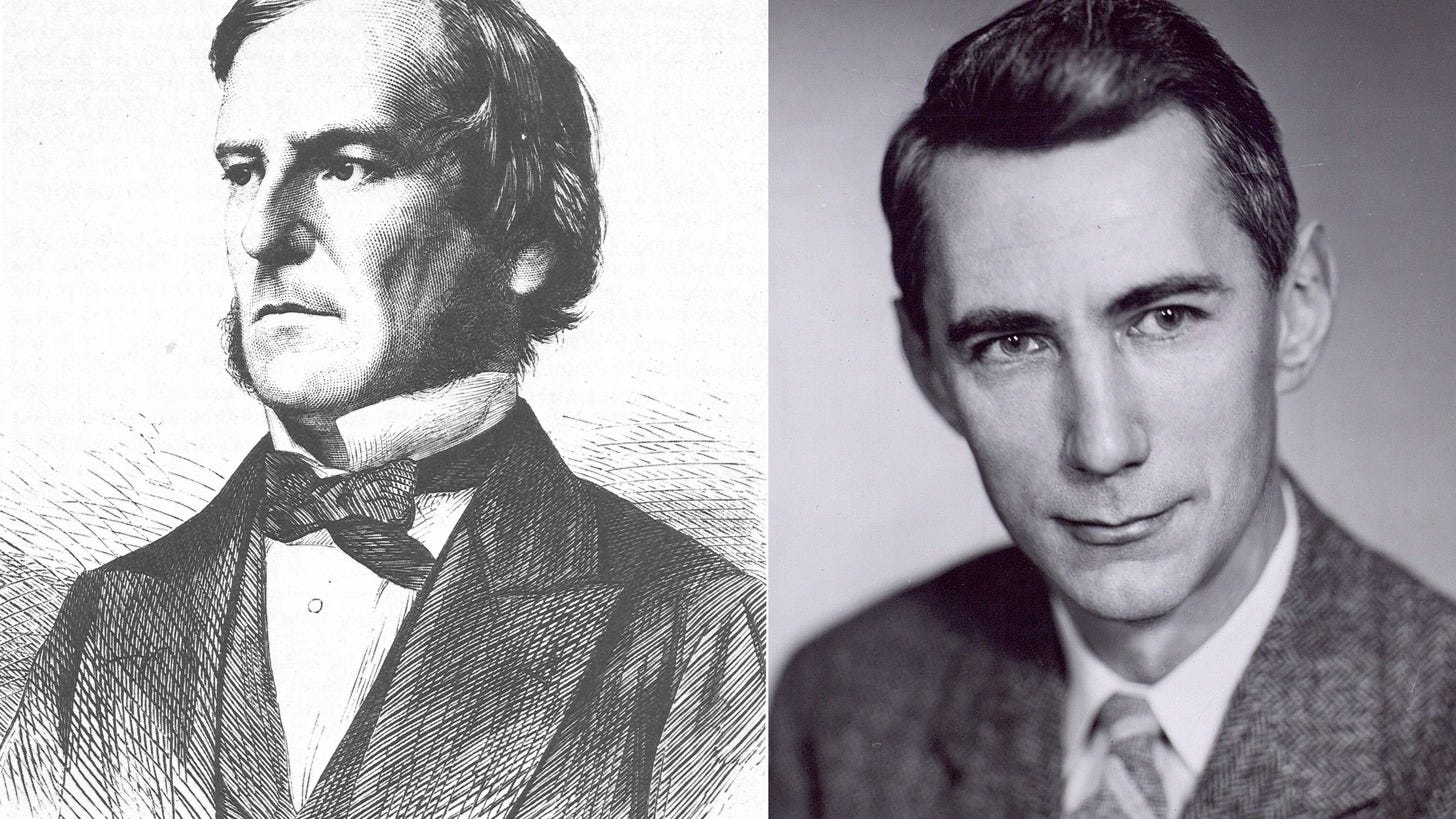

George Boole was born in 1815, the same year as Augusta Ada Byron, the poet’s daughter. One into aristocracy, under the scandal of her father’s name and the suffocating precision of her mother’s curriculum. The other into a cobbler’s shop in Lincoln, England. George’s father, John, made shoes for a living but telescopes for love. Together, as father and son, they built kaleidoscopes, cameras and microscopes, grinding lenses and assembling sundials. John Boole had a mind that often wandered to the curious instead of focusing on what paid. George inherited this tendency: the curiosity, the obsessive focus, and the poverty.

George grew up working class in a time when class dictated whether you were permitted to think for a living. He attended a handful of undistinguished schools. His father taught him the basics of mathematics. A local bookseller was said to have taught him Latin. Everything else he taught himself. Greek, French, German and Italian. By fourteen, he had translated a poem by the Greek poet Meleager and published it in the Lincoln Herald. The translation was so accomplished that a local schoolmaster publicly accused him of plagiarism. The accusation was not about the quality of the work. It was about the source. A cobbler’s son could not possibly know Greek.

At sixteen, George found himself the sole breadwinner for his parents and three younger siblings after his father’s shoe-making business finally collapsed. He took a teaching position at a school in Doncaster. He was not yet old enough to vote. He was already supporting five people. And at night, after the students retired to the dormitory and the schoolhouse was quiet, he opened his mathematics books and kept going.

He spent the next fifteen years teaching. He ran his own school by nineteen. The days belonged to the children: fees, lessons, discipline. The nights belonged to calculus. He worked his way through Lacroix’s Differential and Integral Calculus without a tutor or professor to guide him, a feat that would take Cambridge undergraduates years to accomplish even with expert tutelage. It took him longer. Of course it did. But he got there in the end. In his mid-twenties he was publishing original mathematical research and in 1844, at age twenty-eight, he won the Royal Medal from the Royal Society. This was the first time this prize had been awarded for mathematics. He still did not have a university degree. He had never attended a single university lecture.

Cambridge offered him a place. Not a chair, not a fellowship. A place as an undergraduate. He declined. They wanted him to sit through years of course work he had already surpassed, and complete the standard curriculum like any other student. He was already writing mathematical literature that his would-be professors were referencing in their work. He stayed in Lincoln and kept teaching.

In 1849, Queen’s College Cork, a new university in Ireland, appointed him as its first professor of mathematics. He did not have a degree. His appointment was extraordinary and almost certainly controversial, but his standing in mathematics and the Royal Medal spoke for themselves. He was thirty-three years old, a self-taught cobbler’s son, holding a chair of mathematics. And he had an idea, one that had struck him when he was seventeen and had been forming ever since: that thought itself obeyed mathematical laws.

What Boole saw was so simple that the difficulty is not in understanding it, but in believing that no one had formalised it before.

Logic, since Aristotle, had been expressed in words. All men are mortal. Socrates is a man. Therefore, Socrates is mortal. The reasoning was a chain of sentences. And for two thousand years the logicians had analysed logic by analysing the structure of those sentences. They argued about categories, predicates and quantifiers. The arguments were conducted in prose, the prose was ambiguous and that ambiguity led to arguments that spanned centuries without resolution.

Boole asked: what if you stopped using words?

What he proposed was to replace the words with mathematical symbols. What if, instead of saying “all men are mortal,” you assigned a symbol to the class of all men, and another to the class of all mortal things? Could you then express the relationship between them as an equation? What if logic was not a branch of philosophy at all but a branch of algebra?

He published the idea in 1847, in a pamphlet titled The Mathematical Analysis of Logic. Seven years later he expanded it into his masterwork: An Investigation of the Laws of Thought, which was published in 1854. The title was not meant to be figurative. He meant it literally. He believed he had discovered the mathematical structure of reasoning itself.

Take a simple statement: “It is raining AND it is cold.” In Boolean logic, you do not need the words. Let R stand for “it is raining” and C stand for “it is cold.” These statements, now symbols, are either true or false and Boole represented true as 1 and false as 0. The AND in the original statement is just multiplication. R times C. If both are true (1 times 1), the result is 1: true. If either is false (1 times 0, or 0 times 1, or 0 times 0), the result is 0: false. AND is multiplication. That is all.

NOT is subtraction from 1. If R is true (1), then NOT R becomes false (1-1=0). If R is false (0), then NOT R is true (1-0=1). NOT simply flips the value.

OR is addition, with a caveat. If R is true OR C is true, the result is true. R plus C gives you the combined class, as long as R and C do not overlap.

But what if it is a cold rainy day? R is 1, C is 1, and the arithmetic gives 1 + 1 = 2. In plain English the statement is still true: at least one of “raining” or “cold” holds, so the answer should be 1. A cold rainy day belongs to both classes at once, and ordinary arithmetic has counted it twice. Boole himself was careful to add only classes that could not overlap. His successors cleaned up the rule by saying that in Boolean logic 1 + 1 = 1. The principle, however, was his.

Three operations. AND, OR, NOT. Multiplication, addition and subtraction from 1. With these three tools, applied to values that can only ever be 1 or 0, you can describe any logical statement, any chain of reasoning, any argument that Aristotle or anyone who came after him ever made. The entire armature of formal logic, twenty-three centuries of it, reduced to three operations on two numbers.
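The three operations can be tried out directly as arithmetic on 0 and 1. What follows is a minimal sketch, not Boole's own notation: the function names are chosen here for illustration, and the OR rule uses one common way of handling the overlap, subtracting the product so that a cold rainy day is counted once rather than twice.

```python
from itertools import product

def AND(x, y):
    # AND is multiplication: the result is 1 only when both inputs are 1.
    return x * y

def NOT(x):
    # NOT is subtraction from 1: it flips 0 to 1 and 1 to 0.
    return 1 - x

def OR(x, y):
    # Plain x + y would give 2 when both inputs are 1.
    # Subtracting the overlap x * y keeps the result at 0 or 1.
    return x + y - x * y

def truth_table():
    # One row per combination of R and C: (R, C, R AND C, R OR C, NOT R).
    return [(r, c, AND(r, c), OR(r, c), NOT(r))
            for r, c in product((0, 1), repeat=2)]

for row in truth_table():
    print(row)
```

The last row printed is the cold rainy day: R and C are both 1, and OR still comes out as 1.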

Boole saw something else. Something his contemporaries mostly missed. In his system, a peculiar law held: x times x equals x. Select all the red things from a collection; now select all the red things from the result. You get the same set. Selecting twice is the same as selecting once. In ordinary algebra, the only numbers that behave this way, where x squared equals x, are 0 and 1. In Boole’s algebra, those were the only numbers there were. His variables could be nothing other than 0 or 1, yes or no, true or false. He had, without knowing it, invented a mathematical system built entirely on binary.
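The claim about ordinary algebra is easy to check. If x squared equals x, then x times (x minus 1) equals 0, so x must be 0 or 1. The short sketch below confirms it by brute force over a range of whole numbers; the function name and the range are arbitrary choices made for this illustration.

```python
def idempotent_integers(low, high):
    # Keep every whole number x in [low, high] for which x * x == x.
    return [x for x in range(low, high + 1) if x * x == x]

print(idempotent_integers(-10, 10))  # [0, 1]
```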

Binary itself was not new. The Indian scholar Pingala had described a binary system in the third century BCE, using light and heavy syllables where we use 0 and 1, to analyse the patterns of Sanskrit verse. The hexagrams of the Chinese I Ching, at least three thousand years old, are six-line binary figures. When Gottfried Leibniz published his binary arithmetic in 1703, he did so within a week of receiving a letter from a Jesuit missionary in China pointing out that the I Ching hexagrams already represented binary numbers from 000000 to 111111. Leibniz acknowledged the debt. The idea of expressing the world in two values was ancient and global, and so was the intuition that reasoning itself might run on them. The Yin and Yang of Chinese philosophy, the dualistic logic of Indian Nyaya tradition, the Boolean-like structure hiding inside the I Ching’s hexagram combinations: thinkers across Asia had been exploring the relationship between binary opposites and the structure of thought for millennia. What Boole added was not the insight but the algebra. He gave the relationship between logic and binary a formal, mechanical structure: precise rules that could be written as equations, simplified, and combined. It was that formalism, not the underlying intuition, that Shannon would pick up eighty years later and etch into circuits. The idea was old and belonged to no single culture. The notation that made it engineerable was Boole’s.

“The validity of the processes of analysis,” Boole wrote, “does not depend upon the interpretation of the symbols which are employed, but solely upon the laws of their combination.” It did not matter what the symbols meant; it mattered how they combined. You could use them for logic, for probability, for anything that could be reduced to classes and operations. The algebra was indifferent to its subject-matter. It was a machine for reasoning, and like Babbage’s engine, it did not need to understand what it was doing.

Almost nobody noticed.

Boole was respected in his lifetime, but not for this. His colleagues admired his textbooks on differential equations, which became standard references. His work on logic was acknowledged but was treated as a curiosity, a philosophical novelty that had no obvious practical application. In 1854 the electric telegraph was still young, and there were no networks of switches and relays complex enough to need an algebra. The idea that reasoning could be mechanised was interesting in the abstract, but there was nothing to mechanise it with. A few logicians took up the thread. William Stanley Jevons refined the system. Charles Sanders Peirce extended it. Ernst Schroeder systematised it in three dense volumes. But the work remained in the province of pure mathematics and philosophy, discussed in seminar rooms, irrelevant to engineers.

Boole himself did not live to see the modest recognition that came later. On 24 November 1864, he walked three miles from his home in Ballintemple to Queen’s College Cork in heavy rain. He delivered his lecture in soaking wet clothes. Pneumonia followed. His wife Mary, a practitioner of homeopathic medicine, believed that like cured like, and also that the drenching had caused his illness. According to their daughter Lucy, Mary treated him by pouring cold water onto his bedsheets, on the theory that what had caused the illness would cure it. Whether the treatment hastened his death or simply failed to prevent it is debatable. The man who had reduced reasoning to true and false was undone by a proposition his own algebra would have handled briskly: like cures like. It does not. He died on 8 December 1864. He was forty-nine.

He left behind five daughters, all of them remarkable. Alicia taught herself to visualise four-dimensional geometry and coined the word “polytope.” Lucy became one of the first women to qualify in pharmaceutical chemistry in England. Ethel wrote a novel called The Gadfly that became one of the biggest bestsellers in Soviet history, with over two and a half million copies sold. She did not learn about the Soviet sales until 1955, when a Russian diplomat tracked her down in New York to tell her she was a literary hero in a country she had never visited. The cobbler’s son from Lincoln had produced, through his daughters, a mathematician who could see in four dimensions and one of the most widely read novelists of the twentieth century. Neither achievement had anything to do with Boolean algebra.

The algebra sat on the shelf. Eighty years: a solution with no problem attached. The problem arrived in 1937, in the hands of a twenty-one-year-old from Michigan who had no idea he was about to change the world.

Claude Shannon grew up in Gaylord, a small town in northern Michigan, the kind of place where a clever boy had to make his own entertainment. His father was a businessman and probate judge. His mother was the principal of the local high school. Shannon built model aeroplanes. He built a radio-controlled boat. He worked as a messenger boy for Western Union. In effect he earned his pocket money by carrying around other people’s information, which would turn out to be the most poetically appropriate summer job in the history of science.

His favourite project was a telegraph. He and his friend Rodney Hutchins strung barbed wire between their houses, four blocks apart, and rigged it to carry messages. Thomas Edison had done almost exactly the same thing as a boy, and Edison was Shannon’s childhood hero. Shannon later discovered that they were distant cousins. Both descended from a seventeenth-century colonial settler named John Ogden. The boy who idolised Edison and imitated his experiments would go on to do something more fundamental than anything Edison built, but he did not know that yet. He was just a kid who liked building things and wanted to talk to his friend.

He went to the University of Michigan in 1932, at sixteen, and graduated in 1936 with two bachelor’s degrees: one in electrical engineering and one in mathematics. This matters. Most people picked a side. Engineers built things. Mathematicians proved things. Shannon could do both, and the combination would turn out to matter more than either degree alone.

At Michigan, in a course on symbolic logic, he encountered the work of George Boole.

He arrived at MIT in the autumn of 1936, on a work-study arrangement that split his time between a master’s degree in electrical engineering and a job as a laboratory assistant for Vannevar Bush. Bush was a towering figure in American science, MIT’s vice president and dean of engineering, and the builder of the differential analyser, a room-sized machine that was, at the time, the most advanced calculator in existence.

The differential analyser was an analogue computer, an assembly of gears, wheels, pulleys, and rotating shafts designed to solve differential equations by physically modelling them. Variables were represented by the rotation of rods: a continuous range of values, not discrete digits. It was, in principle, a descendant of Babbage’s mechanical approach, though far more sophisticated. Shannon’s job was to help maintain and operate the machine.

But the part that caught his attention was not the gears. It was the control circuit. The analyser’s operations were directed by a network of about a hundred electrical relays: switches that could be opened or closed by an electromagnet. A relay had two states. It was either on or off. Current either flowed or it did not.

On or off. True or false. 1 or 0.

Shannon looked at those relays and saw George Boole.

Here is the insight, and like the best insights, it is obvious once someone points it out.

A switch is a physical thing that is either closed (current flows through it) or open (current does not). Call closed 1 and open 0. Now put two switches in a line, one after the other, so that current has to pass through both to reach the end. Current flows only if the first switch is closed AND the second switch is closed. If either is open, the circuit is broken. Two switches in series perform the AND operation. Multiplication. 1 times 1 is 1. Anything times 0 is 0.

Now put two switches side by side, so that current can flow through either one. Current reaches the end if the first switch is closed OR the second switch is closed OR both. Two switches in parallel perform the OR operation.

For NOT, use a switch that is closed by default and opens when activated. When the input is 1 (active), the output is 0 (the switch opens). When the input is 0 (inactive), the output is 1 (the switch stays closed). NOT is an inverted switch.

AND, OR, NOT. The same three operations Boole had defined in 1854, sitting in Cork, writing about the laws of thought. Except now they were not abstract symbols on a page. They were physical things you could build with wire and metal. Every Boolean expression could be implemented as a circuit. Every circuit could be described as a Boolean expression. The algebra and the engineering were the same thing, viewed from different directions.
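The correspondence can be sketched in a few lines. The model below is an illustration invented for this purpose, not Shannon's notation: a circuit is a list of paths, each path is a tuple of switch names, and current flows if at least one path has every switch closed. The names `conducts`, `SERIES`, `PARALLEL` and `normally_closed` are all assumptions of the sketch.

```python
from itertools import product

def conducts(paths, closed):
    # Current flows if at least one path has every one of its switches closed.
    return any(all(closed[name] for name in path) for path in paths)

def normally_closed(activated):
    # An inverted switch: closed at rest, open when activated.
    return not activated

SERIES = [("a", "b")]         # one path that runs through both switches
PARALLEL = [("a",), ("b",)]   # two alternative paths, one switch each
INVERTER = [("nc",)]          # a single normally closed contact

for a, b in product((0, 1), repeat=2):
    closed = {"a": bool(a), "b": bool(b)}
    # Series wiring matches Boole's multiplication (AND).
    assert conducts(SERIES, closed) == bool(a * b)
    # Parallel wiring matches OR, written here as a + b - a * b.
    assert conducts(PARALLEL, closed) == bool(a + b - a * b)

for x in (0, 1):
    # The inverted switch matches subtraction from 1 (NOT).
    assert conducts(INVERTER, {"nc": normally_closed(bool(x))}) == bool(1 - x)

print("series = AND, parallel = OR, inverted switch = NOT")
```

Describing the wiring as paths rather than calling Python's own logical operators on the inputs keeps the sketch closer to the physical picture: the logic falls out of the layout.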

Shannon wrote it up as his master’s thesis: “A Symbolic Analysis of Relay and Switching Circuits,” submitted to MIT on 10 August 1937. He was twenty-one years old. The thesis was forty-odd pages. It has been called, by the mathematician Herman Goldstine, “one of the most important master’s theses ever written.” Howard Gardner, the Harvard cognitive scientist, went further: “possibly the most important, and also the most famous, master’s thesis of the century.” Before Shannon, circuit design was an art. Engineers drew diagrams, tried combinations, relied on intuition and experience. After Shannon, it was a science. You wrote down what you wanted the circuit to do as a Boolean expression, simplified it using algebraic rules, and built the simplified version. Fewer switches, fewer components, same logic. The algebra told you what to build.
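A small made-up example shows the method at work; it is not taken from the thesis. Suppose a lamp should light when A and B are both on, or when A is on and B is off. Built literally, that circuit needs four contacts. In Boole's arithmetic the expression is AB + A(1 - B), which multiplies out to AB + A - AB, which is just A. The sketch below checks the simplification by trying every combination of inputs; the function names are hypothetical.

```python
from itertools import product

def original(a, b):
    # (A AND B) OR (A AND NOT B), built literally: four contacts.
    # The two branches can never both be 1, so plain addition is safe here.
    return a * b + a * (1 - b)

def simplified(a, b):
    # The algebra reduces the expression to A alone: one contact.
    return a

def equivalent(f, g, n_inputs):
    # Two circuits are equivalent if they agree on every combination of inputs.
    return all(f(*bits) == g(*bits)
               for bits in product((0, 1), repeat=n_inputs))

print(equivalent(original, simplified, 2))  # True
```

One switch does the work of four, and the second input turns out not to matter at all.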

Boole had given the world a language for reasoning about truth and falsehood. For eighty years, nobody knew what the language was for. Shannon showed that it was for building machines.

The rest of Shannon’s career is a story for other chapters, but a few threads are worth noting here because they reach forward into the book.

In 1943, working at Bell Labs on wartime cryptography, he met a young Englishman named Alan Turing, who was visiting on classified business from Bletchley Park. They ate lunch together in the cafeteria every day, trading ideas over sandwiches while their security clearances kept them professionally separate. Neither knew the details of the other’s most important work. That story belongs to the next chapter.

In 1948, Shannon published “A Mathematical Theory of Communication,” which founded the field of information theory and introduced the bit as a unit of measurement. James Gleick, the science historian, called it more profound and more fundamental than the transistor, which was unveiled the same year. That paper is not this chapter’s story, but it rests on the same foundation: the idea that information can be expressed in binary, as ones and zeros, and manipulated according to formal rules.

And he never stopped building things. He rode unicycles through the corridors of Bell Labs while juggling. He owned a fleet of them: a standard unicycle, one without pedals, one with a square tyre, a unicycle built for two. He built a flame-throwing trumpet, a rocket-powered Frisbee, a computer that calculated in Roman numerals, and a mechanical mouse named Theseus that could learn to navigate a maze. In 1985, at a banquet of the International Information Theory Symposium, the chairman announced that Shannon was in the audience. The applause was so sustained that when he reached the stage he could not be heard. When the room finally went quiet, he said: “This is ridiculous.” Then he pulled three balls from his pocket and began to juggle.

He developed Alzheimer’s disease in the early 1980s. The mind that had defined information, that had shown the world how to measure and transmit and encode and decode it, began to lose its own. In his final years, in a care facility in Medford, Massachusetts, he still asked the nurses about juggling. He died on 24 February 2001, at eighty-four.

The thread that runs from Boole to Shannon is one of the cleanest in the history of ideas. A cobbler’s son from Lincoln, in 1854, proves that all of logic can be reduced to three operations on values that can only be 1 or 0. A judge’s son from Michigan, in 1937, proves that electrical switches can perform those operations physically. Between them, they hand the world two things without which no computer can exist: a formal language for logic, and a way to build that logic in hardware.

Ada Lovelace had seen that Babbage’s engine could manipulate symbols according to rules. Boole formalised what the rules were. Shannon showed that the rules could be etched into circuits.

The next question was what, exactly, those circuits could compute. What were the limits? Was there anything a machine could not do, in principle, regardless of how cleverly it was built?

A young man in Cambridge had already answered the question. He had answered it in 1936, a year before Shannon’s thesis, by imagining a machine that did not exist and proving what it could do. His name was Alan Turing, and the answer would cost him everything.